Signal without direction

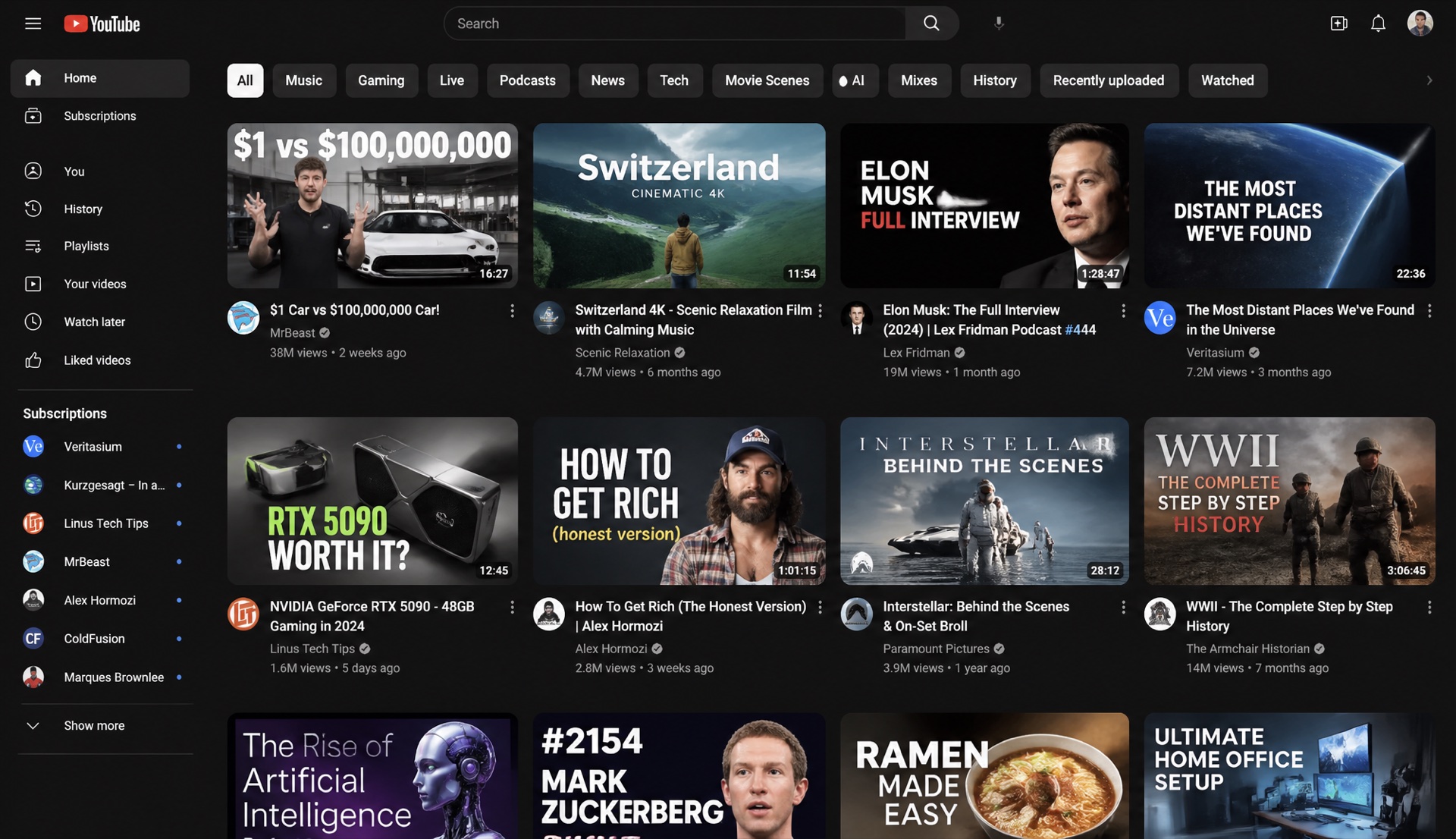

Trend lists show what is moving, but they do not explain which promise, frame, or visual hierarchy fits your next upload.

TUMB listens to the signal, lets the creator and Kimi choose the direction, then edits the supplied image into thumbnail options ready for review.

The signal window shows what is moving; TUMB turns that movement into a compact decision brief before any image edit starts.

trend -> brief -> image edit · Kimi decision route · GPT-image-2 img-to-img

The problem

TUMB keeps the signal, creative promise, user intent and source image in the same decision path.

A thumbnail starts in chat, moves to notes, jumps into image generation, then gets rebuilt in an editor. Context leaks at every handoff.

A one-shot render usually ignores the source image, the creator's intent, and the reason the frame should win.

Guided discovery

The user says what they want. Kimi chooses the strongest direction. TUMB starts image editing only after the route is clear.

I have a video about rebuilding a channel from zero. Make it feel risky, honest and readable on mobile.

TUMB asks for the audience, footage, target emotion and any source assets before a render is allowed.

Each route explains the promise, thumbnail composition, copy rule and why that angle should earn attention.

The system can pick the strongest direction when the creator wants speed, but the confirmation gate stays visible.

The brief, title hooks and frame plan travel together into the queue, so each output stays tied to the reason it was chosen.

recommended route

The pipeline

TUMB keeps every stage small enough to review: signal, intent, Kimi route, image edit, variants, recommendation.

signal readout: velocity, repeated hooks, niche pressure

input brief: audience, footage, assets, emotion

creative direction: promise, risk, frame rule, text rule

img-to-img prompt: composition, contrast, object emphasis

thumbnail options: clean differences, not cloned renders

recommendation: best frame, best hook, why it wins

Asset-guided edit

The user brings the source image and intent. Kimi turns that into a clear route. GPT-image-2 performs the img-to-img thumbnail edit so the output stays tied to the original material.

source image: face, product, screenshot or old thumbnail

creator intent: what should feel stronger, clearer or riskier

Kimi route: promise, composition and mobile readability decision

01 upload · 02 decide · 03 img-to-img · 04 review

Keep the original proof, increase contrast, simplify the text area, and return two stronger thumbnail edits for review.

Built for production

Same core loop: describe the video, choose the route, edit the image, review the variants.

solo creators

01 · turn a rough video idea into a thumbnail/title pack before editing ends

1 approved direction, 4 frames, 8 hooks

channel teams

02 · keep producers, designers and editors aligned on the same promise

brief, layer notes, export handoff

agencies

03 · repeat a creative system across multiple niches without cloning the same look

niche atlas, variants, review history

The atlas

packs: 13

languages: TR / EN / ES / JP

workflow: intent -> edit

Survival sprint

Cinematic hook

Reaction frame

Editorial calm

Vista hook

Day-in-life split

Habit stack

Studio illustration

Pricing preview

TUMB stays focused on the core production loop: research, decide, generate, edit, ship. Plan limits may be adjusted later based on real usage.

Creator: $19/mo

For solo channels testing titles and thumbnail directions.

Studio: $49/mo

For teams shipping more videos with shared project context.

Agency: Custom

For multi-channel operators that need repeatable creative systems.

FAQ

The page explains enough of the system to make the product feel concrete without turning the pitch into an engineering spec.

TUMB turns YouTube trend signals into a structured creative brief, then ships titles, thumbnail variants and editable layered packs from a single studio. You describe the video, TUMB writes the route, generates the frame, and hands you something you can still edit.

A live data connection is not required to start. You can use the studio with sample trend data and your own briefs. Connecting live data later unlocks trend ingestion for your channel and competitors while keeping the workflow simple.

Every render lands as PSD-grade layers: subject cutout, headline lockup, accent shapes and background. Swap the face, retype the headline, switch the accent color. Nothing is locked into one flat image.

The Translate module replaces only the text layers and re-typesets headlines so the new language fits the same visual rhythm. Subject placement, palette and grid stay identical, so the TR / EN / ES / JP versions read as one A/B pack instead of four redesigns.

There are three plans: Creator, Studio and Agency. Pricing is listed in the section above this FAQ, and limits will be tuned as real usage data comes in.